Dissecting Israel’s AI War Machine: Not Targeting, but Mass Destruction

* The killing that Israel carried out in the Gaza war was, to a monstrous degree, the work of artificial intelligence.

It was not humans who determined who would be killed, where, and how. The world has never seen human life destroyed so brutally at the hands of a mechanical system.

* Evidence suggests a systematic effort to kill Palestinians, one in which algorithms are used not to avoid civilian casualties but to ensure them on a large scale.

* The main reason behind this is the emphasis on speed over verification. When the rate of false identification exceeds a certain threshold, mass deaths become inevitable.

* Statistically, the Lavender system has generated 37,000 targets. Operators have only 20 seconds to verify each one. At a 5 percent misidentification rate, about 1,850 people, close to 2,000, could be killed by mistake.

There are pre-set quotas of acceptable civilian deaths: 20 for each ordinary fighter targeted and 100 for each commander. These are essentially algorithmic authorizations for mass killing.
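The arithmetic behind the Lavender figures can be checked with a short sketch. It uses only the numbers quoted above (37,000 targets, 20 seconds per review, a hypothetical 5 percent misidentification rate); the function names are illustrative and do not come from any real system.

```python
def expected_misidentified(targets: int, error_percent: int) -> float:
    """Expected number of wrongly flagged people at a given error rate (in percent)."""
    return targets * error_percent / 100


def total_review_hours(targets: int, seconds_per_target: int) -> float:
    """Total human review time, in hours, if every target gets the same number of seconds."""
    return targets * seconds_per_target / 3600


# Figures quoted in the article; the 5 percent rate is its hypothetical, not a measured value.
TARGETS = 37_000
ERROR_PERCENT = 5
SECONDS_PER_TARGET = 20

misidentified = expected_misidentified(TARGETS, ERROR_PERCENT)
review_hours = total_review_hours(TARGETS, SECONDS_PER_TARGET)

print(f"Expected misidentifications: {misidentified:.0f}")
print(f"Total human review time: {review_hours:.1f} hours")
```

At 5 percent the expected number of misidentified people is 1,850, which the article rounds to 2,000. The second figure shows how little human scrutiny 20 seconds per target amounts to across the entire list.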

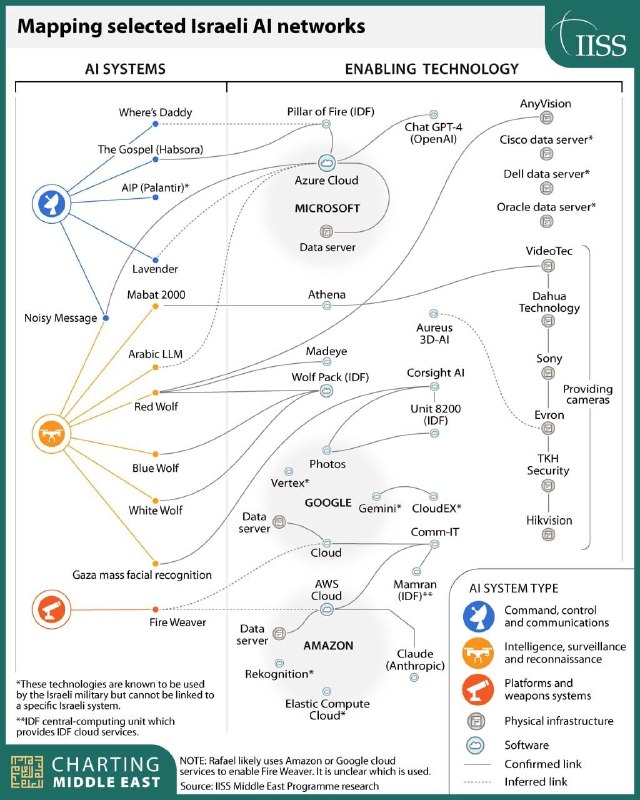

* Habsora has automated the task of targeting: where humans previously identified 50 targets a year, the AI now identifies 100 targets a day. A system called Where’s Daddy? tracks suspects to their family homes. Lavender assigns a ‘risk score’ to every phone user in Gaza.

* These systems are powered by American tech giants. Project Nimbus, a $1.2 billion contract between the Israeli government and Google and Amazon, is providing facial recognition through cloud servers and a secret “blink mechanism.” Microsoft’s Azure is storing 13.6 petabytes of intercepted Palestinian phone calls.

* Palantir has integrated surveillance systems with real-time kill dashboards. Its CEO was in Tel Aviv for a board meeting while Gaza was burning. The Israeli military is currently training an Arabic large language model on a commercial cloud.

* Israel can’t maintain speed and accuracy at the same time. AI models often hallucinate and harbor human biases. When an algorithm kills a child, no one takes responsibility. The result is an unaccountable extermination process.

* Yes, Hamas leaders and fighters have been killed. But so have more than 70,000 Palestinians, 70 percent of them women and children. The ratio of civilian to combatant deaths is almost 5:1, a gross violation of the proportionality principle of the laws of war. This is algorithmic murder disguised as war.

* The question is: When the list of this AI’s mistakes is finally made public, how many more innocent names will have to be added before the world recognizes it as a machine for the extermination of a nation?

Source: New Rules Geo
